The Next Layer of Enterprise Data Architecture

The semantic layer has long been a critical component of enterprise data architecture. It is where technical data structures are translated into business meaning—where numbers, strings, lists, tables, schemas, and pipelines become customers, orders, policies, and operations.

Despite its importance, most organizations struggle to build and maintain semantic layers at scale. Enterprise data is distributed across systems with inconsistent structures, naming, and embedded logic. Maintaining a consistent, accurate representation across environments has required significant manual effort.

Data virtualization addresses part of this problem by providing a unified logical access layer across distributed systems. It creates a consistent surface through which data can be observed and accessed without physical consolidation.

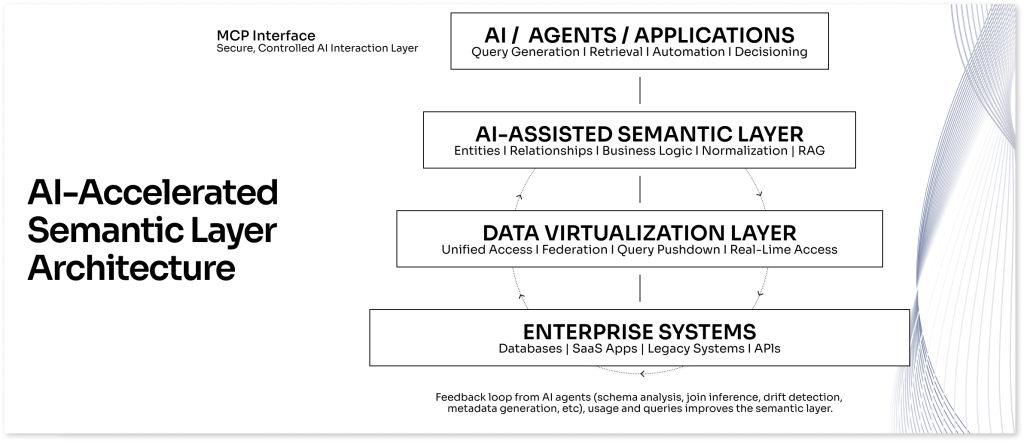

WHAT HAS CHANGED IS THE INTRODUCTION OF AI AS AN ACCELERATOR.

Applied to a virtualized environment, AI can analyze schemas, identify relationships, and accelerate the creation and maintenance of semantic models. Instead of building semantic layers entirely by hand, teams can develop and refine them continuously as systems evolve.

The result is a shift from static models to AI-assisted semantic layers—making it practical to maintain enterprise context at scale.

WHERE THE MAGIC HAPPENS: THE SEMANTIC LAYER

The semantic layer is where enterprise data becomes usable. It defines how distributed structures are translated into consistent business entities across systems, teams, and applications.

Instead of working directly with numbers, strings, lists, tables, schemas, and joins, users and systems operate on shared definitions:

- customers

- policies

- orders

- services

- assets

- operational events

Underneath, the semantic layer encodes how data is constructed—how tables are joined, how entities are resolved across systems, how fields are normalized, and how business rules are applied.

In practice, the semantic layer provides:

- standardized entity definitions

- reusable join logic and transformations

- alignment between operational systems and analytics

- a consistent interface for AI systems

When well defined, it becomes the convergence point between data architecture and AI. Systems operate on shared definitions instead of fragmented schemas.
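As a rough illustration of what "encoding how data is constructed" can look like, the sketch below defines one business entity as data and compiles requests for business fields into SQL. Everything in it is hypothetical: the `Entity` and `Join` classes, the `crm.cust` and `billing.acct` tables, and their column names are invented for this example and do not describe any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class Join:
    table: str   # physical table plus alias, e.g. "billing.acct a" (hypothetical)
    on: str      # join condition, written once and reused by every query


@dataclass
class Entity:
    name: str
    base_table: str
    fields: dict                                # business name -> SQL expression
    joins: list = field(default_factory=list)
    rules: list = field(default_factory=list)   # business rules as SQL predicates

    def to_sql(self, *wanted):
        """Translate a request for business fields into a SQL statement."""
        unknown = [w for w in wanted if w not in self.fields]
        if unknown:
            raise KeyError(f"{self.name} has no field(s): {unknown}")
        cols = ", ".join(f"{self.fields[w]} AS {w}" for w in wanted)
        sql = f"SELECT {cols} FROM {self.base_table}"
        # For simplicity, every declared join is always included.
        for j in self.joins:
            sql += f" JOIN {j.table} ON {j.on}"
        if self.rules:
            sql += " WHERE " + " AND ".join(self.rules)
        return sql


# Hypothetical "customer" entity spanning two source systems.
CUSTOMER = Entity(
    name="customer",
    base_table="crm.cust c",
    fields={
        "customer_id": "c.cust_no",
        "email": "LOWER(c.email_addr)",   # field normalization
        "balance": "a.balance",
    },
    joins=[Join("billing.acct a", "a.customer_no = c.cust_no")],
    rules=["c.deleted = 0"],              # business rule: ignore deleted records
)

print(CUSTOMER.to_sql("customer_id", "email"))
```

Callers ask for `customer_id` and `email` and never see `cust_no` or `email_addr`. If a source column is renamed, only the entity definition changes.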

WHY SEMANTIC LAYERS HAVE HISTORICALLY BEEN DIFFICULT

The challenge is not defining a model once—it is keeping it aligned as systems evolve.

Enterprise data changes continuously. Schemas shift, fields are renamed, systems are added, and ownership moves across teams.

Most semantic layers are built manually—architects defining entities, mapping joins, and encoding logic. These definitions are tightly coupled to the current state of systems.

As systems change, the semantic layer drifts.

In practice, this leads to:

- diverging entity definitions across teams

- duplicated and inconsistent join logic

- models that lag behind production systems

- documentation that no longer reflects reality

Most semantic layers don’t fail—they decay.

Teams eventually fall back to raw schemas or rebuild logic independently. The issue is not the concept—it is the difficulty of maintaining it at scale.

WHY DATA VIRTUALIZATION CHANGES THE FOUNDATION

Fragmentation amplifies the problem: data is spread across multiple systems that cannot easily be analyzed together.

Data virtualization creates a unified logical access layer across these systems. Instead of moving data, it exposes a consistent interface to access and analyze structures in place.

This changes how semantic modeling can be approached.

With a unified access layer:

- entities can be defined across systems

- relationships can be observed without pipelines

- schema differences can be analyzed in context

- data can be accessed dynamically for validation

Data virtualization does not create the semantic layer, but it makes enterprise-wide modeling possible.

It establishes the foundation—but not the mechanism—for maintaining semantic consistency.
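A toy analogy for the unified access layer, using only Python's standard library: SQLite's `ATTACH DATABASE` lets one connection inspect and query several separate databases in place. This is a stand-in for illustration, not how an enterprise virtualization platform is implemented. The `crm` and `billing` databases, their tables, and the `list_columns` helper are all hypothetical.

```python
import sqlite3

# One connection acts as the single logical access point.
conn = sqlite3.connect(":memory:")

# Each ATTACH creates a separate in-memory database, standing in for
# an independent source system.
conn.execute("ATTACH DATABASE ':memory:' AS crm")
conn.execute("ATTACH DATABASE ':memory:' AS billing")

conn.execute("CREATE TABLE crm.cust (cust_no INTEGER, full_name TEXT)")
conn.execute("CREATE TABLE billing.acct (customer_no INTEGER, balance REAL)")
conn.executemany("INSERT INTO crm.cust VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])
conn.executemany("INSERT INTO billing.acct VALUES (?, ?)", [(1, 120.0), (2, 0.0)])


def list_columns(conn, schema, table):
    """Return (column name, declared type) pairs for a table in one source."""
    rows = conn.execute(f"PRAGMA {schema}.table_info({table})").fetchall()
    return [(row[1], row[2]) for row in rows]


# Schemas can be observed through the same surface...
crm_columns = list_columns(conn, "crm", "cust")
billing_columns = list_columns(conn, "billing", "acct")

# ...and a cross-system relationship can be checked without a pipeline.
joined = conn.execute(
    "SELECT c.full_name, a.balance "
    "FROM crm.cust c JOIN billing.acct a ON a.customer_no = c.cust_no "
    "ORDER BY c.cust_no"
).fetchall()
```

The point of the analogy is the shape of the interaction: both schemas and a cross-system join are available from one place, with no data copied between the two sources.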

WHY AI CHANGES THE EQUATION

Even with unified access, semantic modeling has required significant manual effort.

AI accelerates this process.

In a virtualized environment, AI can analyze schemas, metadata, and usage patterns across systems. It can propose entities, suggest relationships, and generate initial semantic structures.

In practice, AI-assisted modeling can:

- identify overlapping entities

- suggest join paths

- detect inconsistencies

- generate documentation

- map technical fields to business concepts

AI does not replace architects. It reduces effort and helps maintain alignment as systems change.
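To make "suggest join paths" concrete, here is a deliberately simple sketch that proposes join candidates from column-name similarity and matching types. It uses plain string matching from Python's standard library as a stand-in; it is not an AI model, and a real AI-assisted workflow would draw on far richer signals such as metadata and usage patterns. The function names, the threshold, and the sample schemas are assumptions made for this example.

```python
from difflib import SequenceMatcher
from itertools import product


def normalize(name):
    """Lowercase a column name and drop common separators."""
    return name.lower().replace("_", "").replace("-", "")


def suggest_joins(left, right, threshold=0.7):
    """Propose (left column, right column, score) join candidates.

    `left` and `right` map column names to declared types. Only columns
    with the same type and a name similarity at or above `threshold`
    are proposed. The 0.7 default is an arbitrary illustrative value.
    """
    suggestions = []
    for (l_col, l_type), (r_col, r_type) in product(left.items(), right.items()):
        if l_type != r_type:
            continue
        score = SequenceMatcher(None, normalize(l_col), normalize(r_col)).ratio()
        if score >= threshold:
            suggestions.append((l_col, r_col, round(score, 2)))
    # Highest-scoring candidates first, for an architect to review.
    return sorted(suggestions, key=lambda s: -s[2])


# Hypothetical schemas from two source systems.
crm_cust = {"cust_no": "INTEGER", "full_name": "TEXT"}
billing_acct = {"customer_no": "INTEGER", "balance": "REAL"}

suggestions = suggest_joins(crm_cust, billing_acct)
```

The output is a ranked list of proposals, not a decision, which matches the division of labor described above: the tooling narrows the search and the architect confirms.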

The combination is what matters:

- data virtualization provides the unified surface

- AI accelerates model creation and maintenance

Together, they make semantic layers sustainable.

HOW THIS WORKS IN PRACTICE

Building a semantic layer is an iterative process shaped by system complexity and scope.

Teams typically start with a defined subset—such as a business unit or group of systems—and expand over time.

The data virtualization layer provides a consistent surface to observe schemas and relationships without moving data.

From there, teams—often with technology integrators—develop an initial semantic model. Simple environments may involve one system; complex ones require reconciling multiple systems with inconsistent structures.

AI-assisted workflows accelerate this process.

In practice, this includes:

- identifying core entities

- analyzing schema inconsistencies

- proposing relationships and joins

- mapping fields to business definitions

- validating against real data

- refining continuously

The result is a continuously evolving semantic layer—not a one-time model.
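The "validating against real data" step can be sketched as a check on a proposed join: what share of keys on one side find a partner on the other, and is the key unique on the side expected to be unique? The `validate_join` helper and the sample values below are hypothetical, and the checks shown are a minimal subset of what a real validation would cover.

```python
def validate_join(left_keys, right_keys):
    """Summarize how well a proposed join key holds up on sampled values."""
    left = [k for k in left_keys if k is not None]
    right = [k for k in right_keys if k is not None]
    right_set = set(right)
    matched = sum(1 for k in left if k in right_set)
    return {
        # Share of non-null left keys that find a partner on the right.
        "match_rate": matched / len(left) if left else 0.0,
        # False means the join would fan out (duplicate right-side keys).
        "right_key_unique": len(right) == len(right_set),
        # Left keys with no partner, worth a manual look.
        "orphans": sorted(set(left) - right_set),
    }


# Hypothetical key samples pulled from two systems.
report = validate_join([1, 2, 3, None, 4], [1, 2, 4, 4, 9])
```

A low match rate or a non-unique key does not necessarily mean the proposal is wrong, but it is a signal to refine the model before others depend on it.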

FROM METADATA TO BUSINESS MEANING

Metadata provides structural understanding, but enterprise AI also requires a semantic layer that expresses data in terms of business meaning.

Within this layer:

- technical schemas map to business terminology

- relationships between entities are clearly defined

- business rules and domain logic are embedded in the data model

Because the virtualization layer exposes a coherent view of enterprise systems, LLMs can assist in building and maintaining this semantic environment.

FROM STATIC MODELS TO LIVING CONTEXT

Traditional semantic layers are static and degrade as systems change.

AI-assisted approaches enable continuous refinement.

Changes in schemas, relationships, and usage can be incorporated into the semantic layer as they occur.

In practice, teams can:

- detect schema changes

- surface cross-system relationships

- identify drift

- update metadata and documentation

The result is a shift from static models to living context—aligned with how systems actually operate.
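Drift detection in particular lends itself to a small example. The sketch below compares the columns a semantic model depends on with a fresh snapshot of the source schema and reports two things: mappings that no longer resolve, and source columns the model does not yet cover. The `detect_drift` function, the mapping format, and the sample schema are assumptions made for illustration.

```python
def detect_drift(mapping, current_schema):
    """Compare a semantic mapping with the current source schema.

    `mapping` maps business field -> (table, column).
    `current_schema` maps table -> set of column names observed now.
    Returns (broken, unmapped).
    """
    # Business fields whose source column has disappeared or been renamed.
    broken = {
        field: (table, column)
        for field, (table, column) in mapping.items()
        if column not in current_schema.get(table, set())
    }
    # Source columns that exist but that no business field refers to.
    mapped = set(mapping.values())
    unmapped = sorted(
        (table, column)
        for table, columns in current_schema.items()
        for column in columns
        if (table, column) not in mapped
    )
    return broken, unmapped


# Hypothetical mapping, written when the column was still "email_addr".
mapping = {
    "customer_id": ("crm.cust", "cust_no"),
    "email": ("crm.cust", "email_addr"),
}
# Hypothetical snapshot after a rename and a newly added column.
current_schema = {"crm.cust": {"cust_no", "email", "loyalty_tier"}}

broken, unmapped = detect_drift(mapping, current_schema)
```

Here `broken` flags the `email` field, and `unmapped` lists both the likely rename target and the new column. Run on a schedule, a check like this turns silent decay into a reviewable list of changes.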

IMPLICATIONS FOR ENTERPRISE AI

As organizations move to production AI, the limiting factor is not model capability—it is data understanding.

Without a semantic layer, AI operates on fragmented schemas and inconsistent definitions, leading to unreliable outputs.

With a semantic layer:

- queries are more accurate

- RAG retrieval improves through entity-level context

- agents operate across systems

- manual translation is reduced

The semantic layer becomes the interface between AI systems and enterprise data.

CONCLUSION

The semantic layer has long been essential—but difficult to maintain at scale.

Data virtualization provides unified access across systems.

AI accelerates semantic model creation and maintenance.

The combination is what matters:

- data virtualization enables cross-system access

- AI enables scalable semantic modeling

- the semantic layer provides context for enterprise AI

For technology integrators and enterprise teams, this results in:

- faster understanding of complex environments

- lower delivery risk

- faster development of AI-enabled capabilities

- improved consistency across systems

This is not an incremental improvement—it is a shift in how enterprise data is structured for AI.

About Accur8

Accur8 provides an upstream data platform for complex enterprise environments. For more than 12 years, Accur8 has helped enterprises and technology integrators understand long-lived system landscapes and move data reliably across evolving environments.

By combining data virtualization with AI-enhanced discovery, Accur8 enables organizations to maintain correlation across systems and deliver trusted data to downstream platforms and applications.